Here's what actually matters for mid-market companies evaluating technology investments this year, based on what we've seen working with manufacturers, retailers, public agencies, and nonprofit organizations across the Pacific Northwest since 2017. We were recently recognized as one of only 10 "Industry Disrupters" nationwide by the U.S. Chamber of Commerce, selected from over 12,000 applicants. That recognition came from challenging industry norms rather than following them, and this piece reflects that approach.

The numbers in this article come from credible sources: Gartner, McKinsey, MIT, RAND Corporation, and industry research firms. We'll cite specific statistics so you can verify them yourself. If a vendor can't point to similar evidence for their claims on topics like this, that tells you something.

AI Integration: What Works vs. What's Vendor Fantasy

The headline statistic that should shape every AI conversation: 95% of generative AI pilots deliver zero measurable P&L impact. That's from MIT research published in July 2025. That doesn't mean "underperforming expectations." It means zero measurable impact.

The broader picture is equally sobering. Over 80% of AI projects fail, twice the failure rate of non-AI IT projects, according to a RAND Corporation analysis. Abandonment is also accelerating: 42% of companies abandoned most AI initiatives in 2025, up from 17% the previous year. Gartner predicts that over 40% of agentic AI projects will be canceled by 2027 due to escalating costs and unclear business value.

So, when a vendor pitches you "AI-powered features" or suggests you need "AI employees" to handle everyday tasks, the appropriate response is skepticism. Someone is getting paid to push those subscriptions, and the data suggests you won't see the returns they're promising. This is why there's a massive surge in tools that are essentially all the same. The only differentiator now is who has the budget to punch through the noise to reach you.

Where AI could deliver ROI

MIT's analysis found back-office automation produces the highest returns, while sales and marketing yield the lowest. Specialized vendor solutions succeed 67% of the time versus only 33% for internal builds. AI coding assistants show 376% ROI over three years with payback under six months, according to Forrester. Customer service chatbots deliver 250% ROI and 18% satisfaction improvements per IDC and Microsoft research. This does not mean that employees are being laid off due to AI. It means higher productivity when the tools are used appropriately under skilled supervision.

The pattern is clear: AI works for specific, bounded tasks where you can measure outcomes. It fails when applied to vague "transformation" goals or used as a substitute for expertise that doesn't already exist in-house. We've literally seen managers and sales personnel use AI to generate emails to employees (cringe), emails to us (more cringe), and outbound sales emails to prospects using templated language generated by ChatGPT. Just... stop.

How we actually use AI

We think through the projects, tasks, and goals ourselves first. Because we work on complex development issues, we use AI for automated research, review what it finds, identify gaps, and run additional rounds until we have what we need to make an informed decision. We add those materials to project spaces and engage AI in discussions for synthesis and understanding. Before executing any helper tasks, we force a conversation to ensure concepts are understood and give the AI a chance to ask clarifying questions. We train AI to become an expert in the area where we want assistance, and that knowledge is reusable.

That's not glamorous, though. It's not "AI employees" autonomously running your business. It's AI as an augmentation tool for skilled professionals who already know their jobs. For example, this firm was around long before AI, and we have professional training in our field, so AI is a tool to augment our work, not replace it.

We also use AI for reviewing scores of analytics exports from Google and Matomo to identify trends in recorded behavioral data. We use it for scaffolding and critical document queries, saving hours of manual lookup (e.g., PDF manuals and documentation). We use it for clerical and repetitive tasks too, such as namespacing, PHP syntactical alignment during language version upgrades, Sass restructuring, and even starter frameworks for Vue.js or Slim. Additionally, we conduct QA for Laravel projects, having AI inspect traces to determine classes and objects on unfamiliar codebases. We run security audits to evaluate compromised sites in detail and use it extensively for documenting code changes.

But if you don't already know your job, AI is pointless. You're supervising something you don't understand. Many businesses have employees blindly using AI tools (without operational policies), and there's significant risk in that approach. Internal operating data can be leaked depending on the provider and their security policies. Sometimes manufacturers and developers find their data has been processed through AI prompts and then stored as training data. Companies need to know what their employees (and contractors) are doing with their operational information, or they could face contractual or regulatory issues.

Technical Debt: The tax you're probably paying

Here's the number that should concern every mid-market executive: over 70% of IT budgets go to "keeping the lights on," leaving less than 30% for growth and innovation. Some organizations see maintenance consuming 89% of budgets. If you're wondering why technology initiatives keep stalling, this is likely why. A common trend we see is that managers don't budget to fix recurring problems and instead try to keep executives "happy" by putting a band-aid on most issues. What would actually make executives happy is not spending time and money on the same issue year after year, but many managers simply don't know how to frame or justify that.

The accumulated technical debt across U.S. software projects reached $1.52 trillion in 2022, growing at 14% annually. Technical debt represents 20-40% of the value of an organization's entire technology estate before depreciation, according to McKinsey. Legacy tech upgrades cost the average business $2.9 million in 2023. We're still working with clients who have systems going back to the mid-2000s and sometimes it's difficult to justify development without sweeping upgrades.

This isn't an abstract concept. Developers spend 42% of their time on technical debt and maintenance instead of building features. That's 17.3 hours weekly per developer not moving your business forward. Companies with high technical debt spend 40% more on maintenance and deliver features 25-50% slower than competitors.
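The 42% share and the 17.3-hour figure are consistent with each other only for a workweek of roughly 41 hours. A minimal Python sketch of that arithmetic follows. The team size and the 48 working weeks per year are hypothetical inputs for illustration, not figures from the cited research.

```python
# Figures quoted in the paragraph above.
DEBT_SHARE = 0.42            # share of developer time spent on technical debt
DEBT_HOURS_PER_WEEK = 17.3   # hours per developer per week


def implied_workweek_hours(debt_hours: float = DEBT_HOURS_PER_WEEK,
                           share: float = DEBT_SHARE) -> float:
    """Workweek length implied by the two quoted figures."""
    return debt_hours / share


def annual_debt_hours(team_size: int,
                      working_weeks: int = 48,  # assumption, not from the source
                      debt_hours: float = DEBT_HOURS_PER_WEEK) -> float:
    """Hours per year a team spends on technical debt and maintenance."""
    return team_size * working_weeks * debt_hours


if __name__ == "__main__":
    print(f"Implied workweek: {implied_workweek_hours():.1f} hours")
    print(f"Team of 5, hours per year: {annual_debt_hours(5):.0f}")
```

For a hypothetical five-developer team, that comes to roughly 4,150 hours a year under these assumptions. Multiply by your own loaded hourly cost to turn it into a budget line.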

How technical debt accumulates

It happens when projects are treated as one-off builds without planning for future migration or maintenance. Unfortunately, this is becoming the "norm," especially on higher-end technical builds and government projects where vendors promise "quick implementations" that merely cut corners. When contractors with inconsistent code quality pile up code that subsequent developers can't maintain, the project becomes twice as expensive to maintain. When "keeping the lights on" consumes so much budget that modernization gets perpetually deferred, the company bleeds money fixing hot garbage that shouldn't have been approved to begin with.

We experienced this ourselves and it's been a nightmare. After years of working with contractors, and after enough growth to allow more administrative oversight, we deliberately audited the actual code quality and associated costs. We found patterns that weren't sustainable: inconsistent work, a compounding maintenance burden, and quality issues that required rework on our dime for the client. So we made the decision to reset our team and the organization, and we now hire U.S. employees only. We're rebuilding from a clean foundation.

We're not asking clients to learn lessons we weren't willing to apply to our own operations. These are growing pains that many don't know how to navigate, and they can get complicated.

The security dimension

Legacy systems often cannot properly support modern security features such as multi-factor authentication, AES-256 encryption, or TLS 1.3. Breaches involving legacy system credentials take 292 days to identify and contain versus 200 days for modern systems.
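One of these gaps is easy to check from the outside. The sketch below, using only Python's standard library, tries to connect to a host with TLS 1.3 as the minimum accepted version. The function names are ours and purely illustrative, and it assumes a Python build linked against an OpenSSL version with TLS 1.3 support.

```python
import socket
import ssl


def tls13_only_context() -> ssl.SSLContext:
    """Client context that refuses anything older than TLS 1.3."""
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    return ctx


def supports_tls13(host: str, port: int = 443, timeout: float = 5.0) -> bool:
    """Return True if the host completes a TLS 1.3 handshake.

    False can also mean the host was unreachable or its certificate
    failed validation, so treat a negative result as a prompt to
    investigate, not as proof.
    """
    ctx = tls13_only_context()
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            with ctx.wrap_socket(sock, server_hostname=host) as tls:
                return tls.version() == "TLSv1.3"
    except OSError:  # ssl.SSLError is a subclass of OSError
        return False
```

Calling `supports_tls13("legacy-app.example.com")` (a placeholder hostname) against each system you operate gives a quick first inventory of which ones need attention.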

Breaches identified within 200 days cost 23% less to resolve. For context, 67% of healthcare organizations experienced ransomware attacks in 2024, driven substantially by legacy medical systems. Most issues with legacy systems involve workarounds to common problems, and those workarounds inherently raise security risk.

The modernization trap

Here's the uncomfortable reality: 80% of modernization projects fail due to inadequate planning. ERP implementations specifically see 55-75% failure rates, with 64% experiencing budget overruns and 51% causing operational disruptions at go-live. The average ERP project exceeds initial budgets by 300-400% and timelines by 30%.

The solution isn't to avoid modernization but to approach it differently. We recommend tools and platforms based on professional expertise and actual client needs, not pop-culture trends or client opinions about what technology they think they need. We also plan for migrations from day one, because we want an open architecture that allows freedom of movement. We scope projects in multiple phases because large-scale projects fail 3-5 times more often than small ones. That doesn't give managers license to declare a particular phase unnecessary, though. Each phase is in the plan for a reason, and task modularity doesn't confer a right to veto common sense.

Vendor Lock-In: What your software provider isn't telling you

Four times last year, prospects came to us wanting to escape their current web or software situation. The conversation went the same way each time: they'd already spent $30,000 or more with a vendor, realized the platform wasn't serving them, and wanted out. In short, they were sold a bill of goods that didn't produce any ROI. Our answer was painful but honest: getting out would cost at least as much as they'd already spent, sometimes more. In some cases, contract terms with penalties made walking away even harder. Only one case was directly recoverable, and that client was lucky.

These industry trends aren't accidental. SaaS vendors structure relationships to maximize lock-in while telling customers they're getting flexibility and value. Sales teams are also very skilled at making things feel believable when they're not. Minimum monthly hours, minimum project costs, predatory termination fees, and shadow leasing of the "property" one believes they own are all pitfalls. When you leave a provider, you don't get to take that property with you.

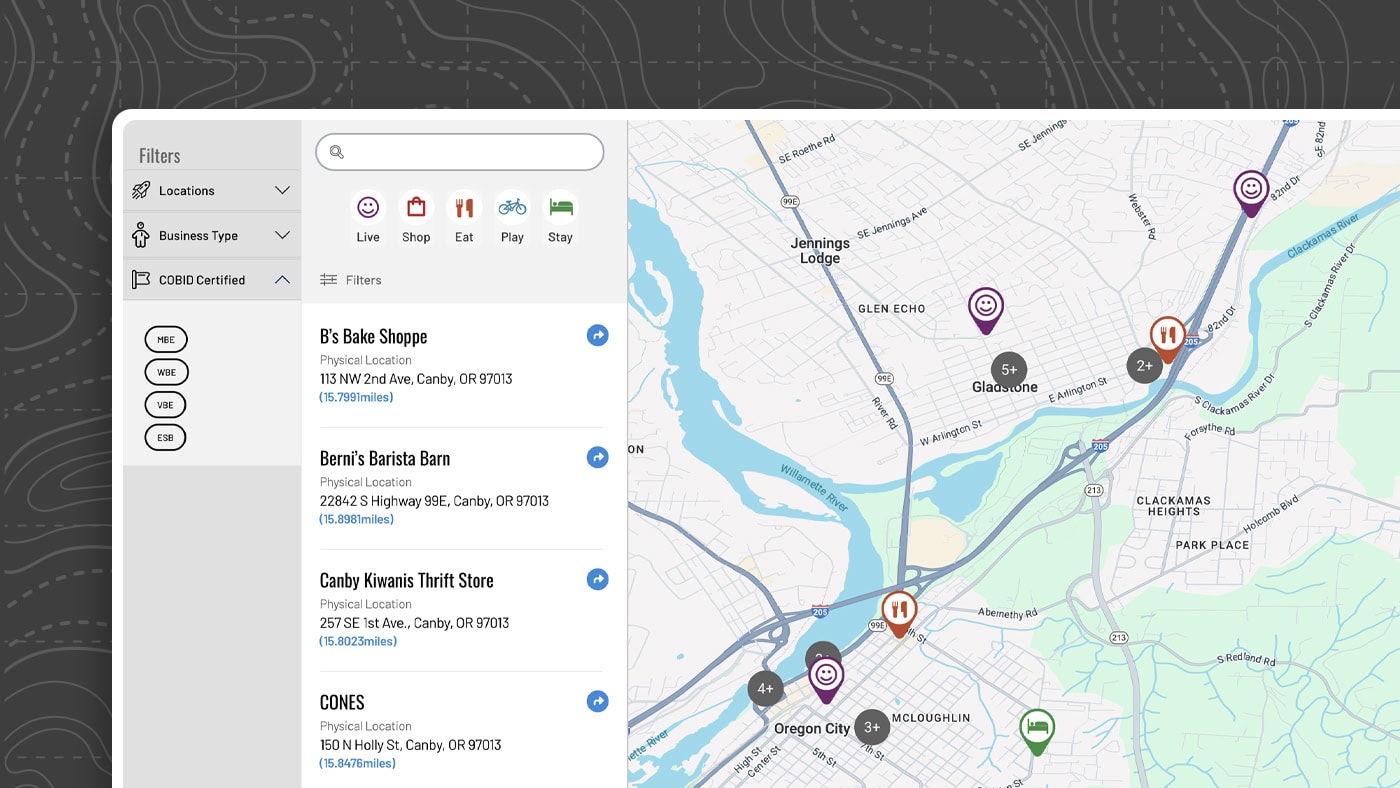

The membership and Chamber sector illustrates the problem clearly. Legacy SaaS providers charge $8,000-$20,000 annually for platforms that manage directories, maps, events, and member data. These systems often run on outdated architectures disguised as leading edge. The directories and maps they provide frequently lack proper meta tags or schema markup for indexing and reach. Member data gets buried on subdomains where search engines can't properly index it. Vendors control all leads coming through contact forms and have been known to "lose" leads or cherry-pick the valuable ones. We've seen this firsthand: vendors who host systems for clients collect the lead-gen emails from the contact forms on those systems, so the communications between a member and a potential customer are not private.
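For readers who haven't seen schema markup, here is a minimal sketch of what a directory listing could emit for each member, using the schema.org LocalBusiness and PostalAddress types. The function name and the sample business are hypothetical, and a real listing would likely carry more properties.

```python
import json


def member_jsonld(name: str, url: str, telephone: str,
                  street: str, city: str, region: str, postal_code: str) -> str:
    """Build a JSON-LD <script> tag describing one directory member."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "telephone": telephone,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
            "addressRegion": region,
            "postalCode": postal_code,
        },
    }
    # Escape "</" so member-supplied text cannot close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">{body}</script>'


if __name__ == "__main__":
    # Hypothetical member, for illustration only.
    print(member_jsonld("Example Bakery", "https://www.example.com",
                        "+1-555-0100", "123 Main St", "Portland", "OR", "97201"))
```

If a vendor's directory pages contain nothing like this in their HTML source, search engines have far less structured information about your members to work with.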

The data sovereignty shift

Enterprise buyers have caught on to this. 93% of large enterprises now prioritize data sovereignty in vendor decisions. The sovereign cloud market is projected to grow from $96.77 billion to $648.87 billion by 2033. Mid-market companies are following the same trajectory.

Third-party involvement in breaches doubled from 15% to 30% in a single year, according to the 2025 Verizon Data Breach Investigations Report. 70% of compliance failures are attributed to managing third-party vendor risks. When your data lives in someone else's system, your security depends on their practices. Do you really know where your data is being hosted, and is it even in the United States at all?

The compliance burden

Thirteen states now have comprehensive data privacy laws, with eight additional laws taking effect in 2025. Oregon, Delaware, Minnesota, and New Jersey provide no nonprofit exemption. State penalties reach $7,500 per violation. GDPR fines can reach €20 million or 4% of global revenue for organizations with EU operations.
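To see how these ceilings scale, here is a rough Python sketch. It takes the higher of the two GDPR figures, which is how the regulation's top fine tier is defined. How a "violation" is counted under state law varies and is treated here as a simple multiplier, which is our simplifying assumption. The function names are illustrative, and none of this is legal advice.

```python
def gdpr_max_fine_eur(global_annual_revenue_eur: float) -> float:
    """Upper bound of the top GDPR fine tier: EUR 20M or 4% of revenue, whichever is higher."""
    return max(20_000_000.0, 0.04 * global_annual_revenue_eur)


def state_penalty_ceiling_usd(violations: int,
                              per_violation_usd: float = 7_500.0) -> float:
    """Ceiling on state penalties, assuming each violation is penalized at the maximum."""
    return violations * per_violation_usd


if __name__ == "__main__":
    # Hypothetical inputs, for illustration only.
    print(f"GDPR ceiling at EUR 100M revenue: EUR {gdpr_max_fine_eur(100_000_000):,.0f}")
    print(f"State ceiling for 100 violations: ${state_penalty_ceiling_usd(100):,.0f}")
```

For a mid-market company with revenue under €500 million, the €20 million floor is the binding number, not the 4%.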

If your vendor can't clearly explain where your data lives, who has access, and how it's protected, you have a compliance problem waiting to surface. These issues can be expensive to resolve once contracts are signed, and in some cases, they can't be resolved at all unless you leave.

Our approach

We invested $40,000 in privately owned, fully firewalled physical server infrastructure with redundant encrypted backups that run hourly. Client data stays on systems we control, and nothing touches third-party clouds without explicit client approval. Even our email runs on our own systems with dedicated IP addresses. While we don't host websites and applications (that's too big of an undertaking for us), clients can rest easy knowing that their competitive projects are never going to be compromised.

This isn't paranoia. It's what data sovereignty actually requires. When we tell clients their data is protected, we can show them exactly where it lives, who has access to it, and how it's secured. Not many providers can do that.

The decision framework

The vendors aren't wrong that technology investment matters. Companies with aligned digital capabilities achieve 14% market-cap premiums and grow 75% faster than peers. But the path from vendor pitch to business value requires navigating failure rates that make success the exception rather than the rule. Remember the old saying, "Proper planning prevents poor performance"? It's even more relevant now.

Only 0.5% of IT projects meet all three success criteria: on time, on budget, and delivering intended benefits. That's 1 in 200 projects. The companies that beat those odds share common characteristics: they question vendor claims with data, scope projects to be small rather than ambitious, and measure success by business outcomes rather than technology adoption.

When a vendor promises transformation, ask for evidence. When they cite industry trends, ask who funded the research. When they suggest you're falling behind competitors, ask how they know what your competitors are actually doing, as opposed to offering strategic guesswork. Our client conversations are grounded in reality and data. We won't entertain speculation.

The decisions you make this year about AI, technical debt, and vendor relationships will compound for years. Make them based on what the data actually shows, not what someone with a subscription to sell wants you to believe.